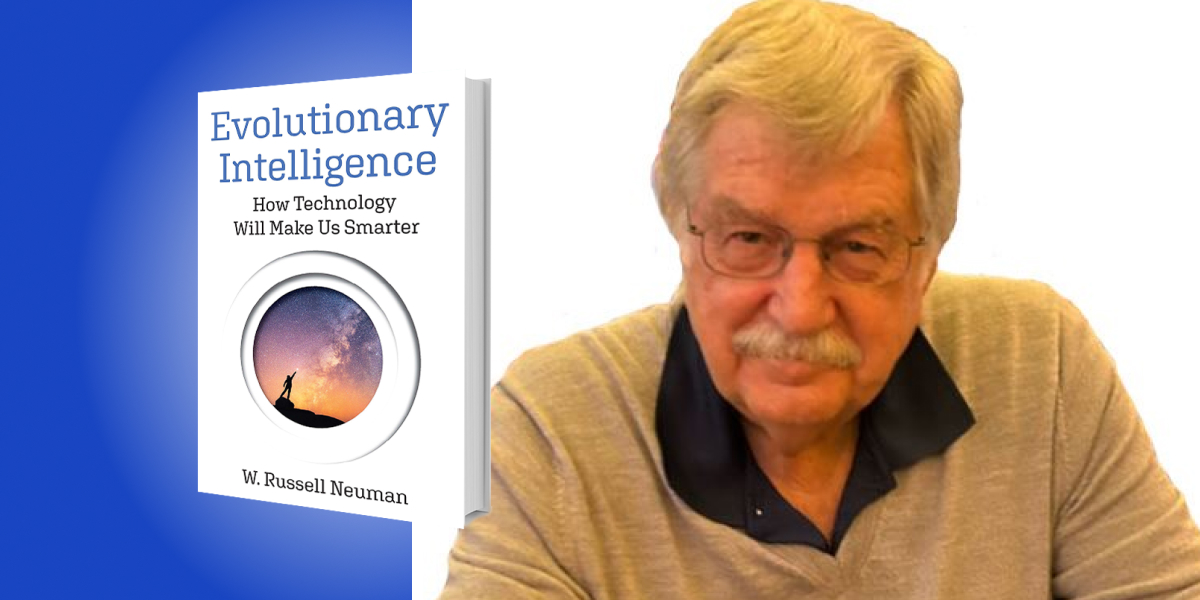

Russ Neuman has studied the social impact of technology at the MIT Media Lab, taught at Harvard and Yale, and worked on technology policy at the White House Office of Science and Technology Policy. His recent books include The Digital Difference: Media Technology and the Theory of Communication Effects. He is currently Professor of Media Technology at NYU.

Below, Russ shares five key insights from his new book, Evolutionary Intelligence: How Technology Will Make Us Smarter. Listen to the audio version—read by Russ himself—in the Next Big Idea App.

1. The popular “Turing Test” (that measures how smart computers are) has it backward.

Turing proposed that if, in a keyboard conversation, you couldn’t tell whether you were messaging with a computer or a real person, then the computer had demonstrated true intelligence. This is a classic human-centric mistake.

Intelligence is the optimal selection of alternative means to a goal. Humans are notoriously and demonstrably bad at that. Various emotions, wishful thinking, and cognitive laziness often get in the way. Why would we want to model optimal machine-based intelligence on ourselves? Here is our chance to use new models of intelligence to compensate for relatively well-understood shortcomings of the evolved human cognitive system. I call it Evolutionary Intelligence.

Human capacities to get things done have co-evolved with the technologies we have invented. The wheel made us more mobile. Machine power made us stronger. Telecommunication gave us communication over great distances. Evolutionary Intelligence will make us smarter.

2. In the early days of automobiles we dubbed them horseless carriages.

Who could imagine a carriage without a horse? Today, many of us still think of computers in a similar historically bounded way. A computer is a thing, a box full of microchips with a screen that you plug into the wall. Computers used to be things. But they have been shrinking, growing more powerful, and connecting to an immense digital network. At first, computers sat on our desks, then in our laps, then in our hands as smartphones. What happens next? They disappear! Literally. Computers become part of a seamless, wireless, networked, invisible digital environment that helps us drive to the right address, pay for a purchase, correct our spelling, and remember a phone number.


The key question is whether we model machine intelligence on human intelligence, complete with all our aggressive, selfish, competitive, self-serving impulses, or refine a compensatory intelligence to save us from ourselves. Will we succeed at developing Evolutionary Intelligence before it’s too late?

3. Does AI want to kill humans?

A group of distinguished scientists and entrepreneurs recently posted a call for a six-month pause in the development of artificial intelligence technologies because of the dangerous effects runaway development could have on humanity. The pause didn’t happen. I bet they knew it wouldn’t happen. These are very smart folks and I suspect they were just using the dramatic “pause” idea to draw public attention to the importance of their concerns.

So, what is this all about? Among the prominent AI skeptics is Eliezer Yudkowsky, whose cover story in Time magazine reported “that the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die. Not as in ‘maybe possibly some remote chance,’ but as in ‘that is the obvious thing that would happen.’” Strong words. It strikes me as a clear example of projecting human qualities of aggression and competition onto computers.

We have these psychological characteristics and emotions because they were beneficial for survival in our evolutionary history, especially in times of scarcity. We see the same propensities among our animal forebears. But computers did not come into existence through a desperate effort to hunt small game and gather berries. In fact, the equivalent primary directives for computational intelligence are derived from our programming and design. So, no, Siri doesn’t want to kill you.

4. How would computer-assisted Evolutionary Intelligence work?

One example might be intelligent privacy. We probably assume that our digital environment is a sworn enemy of our capacity for personal privacy. But putting computational intelligence to work can reverse that.

Your personal information is a valuable commodity for social media and online marketing giants like Google, Meta/Facebook, Amazon, and X/Twitter. Think about the rough numbers involved. Internet advertising in the U.S. is about $200 billion a year. The number of active online users is about 200 million. Two hundred billion dollars divided by 200 million people means your personal information is worth about $1,000 every year. Why not get a piece of the action for yourself? It’s your data. But don’t be greedy. Offer to split it with the Internet big guys 50-50. Five hundred for you, five hundred for those guys to cover their expenses.
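For readers who like to check the math, the back-of-the-envelope calculation above can be written out in a few lines of Python. This is only a sketch of the rough figures quoted in the text; the function and variable names are made up for illustration, and the 50-50 share is simply the split proposed above, not a real pricing model.

```python
# Rough figures quoted in the text (round approximations, not audited data).
US_INTERNET_AD_SPEND_USD = 200_000_000_000  # about $200 billion per year
ACTIVE_ONLINE_USERS = 200_000_000           # about 200 million people


def value_per_user(ad_spend, users):
    """Average yearly ad spend per active user, in dollars."""
    return ad_spend / users


def split(value, user_share=0.5):
    """Divide a yearly value between the user and the platform.

    user_share is the hypothetical fraction kept by the user;
    0.5 is the 50-50 deal suggested in the text.
    """
    user_part = value * user_share
    platform_part = value - user_part
    return user_part, platform_part


per_user = value_per_user(US_INTERNET_AD_SPEND_USD, ACTIVE_ONLINE_USERS)
yours, theirs = split(per_user)

print(f"Per-user value: ${per_user:,.0f} per year")
print(f"Your half: ${yours:,.0f}; the platforms' half: ${theirs:,.0f}")
```

Changing `user_share` shows how a different deal would divide the same $1,000.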


If you want complete privacy, tell your personal digital interface to share no personal information, and accept that you forfeit any payment. If you don’t care that Google and Amazon know you love chocolate and collect stamps, have your digital interface negotiate a deal every time personal information is requested. You don’t have time to negotiate the details, but your smartphone does. It’s smart and getting smarter, and it works for you.

5. ChatGPT alerted the bureaucrats in Washington that AI could be the next big idea.

Politicians’ first thought was to set up a new federal regulatory commission to make rules and regulations about the creation and use of AI technologies. The lawmakers were candid about their inability to understand what AI is and how it works. But when in doubt, don’t hesitate, just regulate. It strikes me as amusing. Trying to regulate artificial intelligence is like trying to nail chocolate pudding to the wall. The attempt to direct this fast-changing category of mathematical tools through legislation or traditional regulatory mechanisms is unlikely to be successful and is much more likely to have negative, unanticipated consequences.

Artificial intelligence is the application of a set of mathematical algorithms. You can’t regulate math. If a crime is facilitated by using an automobile, a telephone, a hammer, or a knife, the appropriate response is to focus on the criminal and the criminal act—not the regulation or prohibition of tools potentially put to use. Concerns have been raised that AI may be involved in financial crimes, identity theft, unwelcome violations of personal privacy, racial bias, the dissemination of fake news, plagiarism, and physical harm to humans. We have an extensive legal system for identifying and adjudicating such matters.

A new regulatory agency to monitor and control high-tech “hammers and knives” that may be used in criminal activity is ill-advised. The best defense against a bad guy with an AI tool is a good guy with an AI tool.
